Advanced ixml Hands On

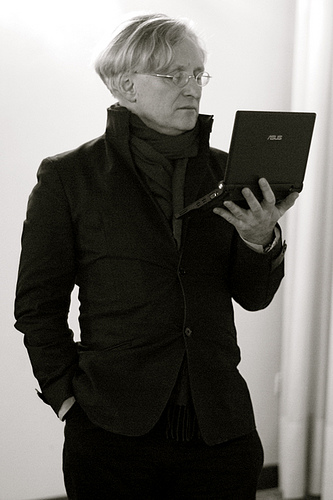

Steven Pemberton, CWI, Amsterdam

I assume you already know what ixml is. In fact that is a condition of attending this tutorial!

Follow along at http://www.cwi.nl/~steven/ixml/advanced/

This is the first time I have given this tutorial, and I anticipate we won't have enough time to do it all today. But fear not! The tutorial is also designed for self-teaching, and these slides are also online.

As with the introductory tutorial, the structure is:

tutorial: Steven++Exercise.

Example answers to all the exercises are at the end.

Please ask questions, and give feedback!

This year you have a choice of two zero-install implementations, both accessed via a browser.

John's is much faster; mine is better when it comes to ambiguity.

rule: a, "b", c;

"d", e, "f". {comment}

Terminals match a literal string:

Literals:

"terminal" 'terminal' "don't" 'don''t'

Encoded:#fff

Character sets match a single character:

Inclusion:

["a"; "()+"; #fff; "A"-"Z"]

Exclusion:~["a"; "()+"; #fff; "A"-"Z"]

Insertions match nothing, only appear in the output:

+"string" +#fff

Group : (a, "b", c; "d", e, "f")

Option: a?

Repetitions:

Zero or more:

a*

One or more:a+

Zero or more with separator:a**b

One or more with separator:a++b

Serialisation markers:

-do not include in serialisationdo include in serialisation (default)

^serialise nonterminal as attribute

@

In Finnish, dates are written like:

21. syyskuuta 2022

The Finnish names for months as used in dates are:

"tammikuuta"; "helmikuuta"; "maaliskuuta"; "huhtikuuta"; "toukokuuta"; "kesäkuuta"; "heinäkuuta"; "elokuuta"; "syyskuuta"; "lokakuuta"; "marraskuuta"; "joulukuuta".

Write ixml to read Finnish dates and serialise them as:

<date> <day>21</day> <month>9</month> <year>2022</year> </date>

(Hint: use insertions)

By default, all characters in the input end up as character content in the serialised XML. For instance,

i:=0

might yield

<statement>

<assignment>

<id>i</id>:=<expr><number>0</number></expr>

</assignment>

</statement>

Effectively, all ixml is doing is putting tags round parts of the input to expose its structure.

There are some advantages to this: you lose no information: only gain it; and to get the input back, you just concatenate the character content.

An option is to delete terminals that don't add any information. In the

above example, we know it's an assignment, so the := adds no

information. On the other hand the i and 0 are both

essential to the meaning.

assignment: id, -":=", expression.

giving

<statement>

<assignment>

<id>i</id><expr><number>0</number></expr>

</assignment>

</statement>

Another option is to put all your meaningful characters into attributes. If you also delete your non-essential characters, elements then contain no character content, only other elements. One advantage of this approach is that you don't have any fight with the XML whitespace rules (and thus are free to pretty-print your XML).

assignment: id, -":=", expression. expression: ...; number. id: name. @name: [L]+. number: value. @value: -digit+.

giving

<statement>

<assignment>

<id name="i"/><expr><number value="0"/></expr>

</assignment>

</statement>

One thing to watch out for though is that you can't have more than one attribute of a particular name.

Attributes lose structure. If you specify that a nonterminal is to be serialised as an attribute, then all non-deleted terminals under that nonterminal get concatenated to form the attribute. For instance with:

<web>

<IRI>

<scheme>http</scheme>

<host>

<domain>www</domain>

<domain>w3</domain>

<domain>org</domain>

</host>

<path>

<segment>2002</segment>

<segment>xforms</segment>

</path>

</IRI>

</web>

turning IRI into an attribute would give:

<web IRI="httpwwww3org2002xforms"/>

<web IRI="httpwwww3org2002xforms"/>

so in that case, you definitely shouldn't delete the non-essential terminals:

<web IRI="http://www.w3.org/2002/xforms"/>

It may not matter to you that the structure has been lost: it depends on what you will be doing with the output, but if it means that it will have to parsed again, it might be a better idea to retain the structure.

Note that in creating the content of an attribute, only the terminals are used, so the marks on the contained nonterminals are ignored.

Default: everything is serialised as an element.

Override, either by marking the rule definition:

-subtype: @name.

or its use:

pointer: -"pointer to ", -subtype.

Which should you choose?

Good practice is to specify the default on the rule, overrides at use.

You can then see at a glance which elements can occur in a serialisation, just by looking at the names of the rules.

There is of course the possibility of marking both definition and use, but that makes life harder if you decide to change it.

Don't forget that if a rule is marked as deleted or an attribute, you can

override that with ^. For instance, with

-subtype: @name.

pointer: -"pointer to ", ^subtype.

subtype would appear as an element in its use in

pointer.

Wikipedia has a markup language for pages, that includes the following notation for links:

[[link|Text Content]]

which produces a result like:

<a href="/wiki/link">Text Content</a>

Write some ixml to do this.

There are three main types of nonterminal:

statement,

assignment, id, expr, and

number earlier), To show all three in action, let's take as example very simple arithmetic expressions. To keep it super simple, we shall have addition, multiplication, and bracketing, of numbers and identifiers.

At a syntactic level, expressions are just compositions of operands and operators:

expression: operand++operator.

operator: "+"; "×".

operand: id; number; "(", expression, ")".

id: [L]+.

number: ["0"-"9"]+.

Note operand is a refinement: it gathers the three types of

operand together. With

a+2×b

we get

<expression>

<operand>

<id>a</id>

</operand>

<operator>+</operator>

<operand>

<number>2</number>

</operand>

<operator>×</operator>

<operand>

<id>b</id>

</operand>

</expression>

Clearly we can delete the operand tags:

-operand: id; number; "(", expression, ")".

to give

<expression> <id>a</id> <operator>+</operator> <number>2</number> <operator>×</operator> <id>b</id> </expression>

The operator element on the other hand serves a necessary

purpose, and should remain.

At a semantic level, multiplication has to be done before addition. We add some structural elements. Traditionally:

expression: sum. sum: expression, "+", product; product. product: product, "×", operand; operand.

so operands of + are products: multiplications are done first. This gives

for a+2×b:

<expression>

<sum>

<expression>

<sum>

<product>

<id>a</id>

</product>

</sum>

</expression>+

<product>

<product>

<number>2</number>

</product>×

<id>b</id>

</product>

</sum>

</expression>

This now at least gives the right structure, but at the cost of adding several layers of elements that add nothing to the understanding.

Ideally, we want

<expression>

<sum>

<id>a</id>+

<product>

<number>2</number>×

<id>b</id>

</product>

</sum>

</expression>

In other words, we want a sum element only if there is an

actual + involved.

Similarly, we only want a product element if there is a ×

involved.

So in the rule for sum:

sum: expression, "+", product; product.

it may be that the operand of + is not a product. So let us call the operand of a sum a term:

sum: expression, "+", term.

A term is either a product, or an operand:

term: product; operand.

Since a sum is now only when a + is involved, we also have to add term to the rule for expression:

expression: sum; term.

Now using the same argument for product:

product: term, "×", operand.

Since term is only there for structural reasons, and has no semantic role, we can delete it:

-term: product; operand.

Applying this to our input, we now get:

<expression>

<sum>

<id>a</id>+

<product>

<number>2</number>×

<id>b</id>

</product>

</sum>

</expression>

The whole grammar now looks like:

expression: sum; term.

sum: -expression, "+", term.

-term: product; operand.

product: term, "×", operand.

-operand: id; number; "(", expression, ")".

id: [L]+.

number: ["0"-"9"]+.

One last observation. With a bracketed sub-expression like

a×(2+b), we get:

<expression>

<product>

<id>a</id>×(

<expression>

<sum>

<number>2</number>+

<id>b</id>

</sum>

</expression>)

</product>

</expression>

This inner expression element clearly adds nothing, so we change the rule for bracketed expressions to

-operand: id; number; "(", -expression, ")".

to give

<expression>

<product>

<id>a</id>×(

<sum>

<number>2</number>+

<id>b</id>

</sum>)

</product>

</expression>

The grammar is here. Delete unwanted terminals, and add a rule

for comparison that takes two expressions and compares them with

the operators <, =, >,

≠, ≤, and ≥.

Imagine a programming language with type declarations:

type pointer to integer: refint.

We could write an ixml grammar that included this:

type-declaration: -"type ", type, -": ", name, -".".

type: {...;} pointer; name.

pointer: -"pointer to ", subtype.

subtype: name.

name: [L]+.

which would produce

<type-declaration>

<type>

<pointer>

<subtype>

<name>integer</name>

</subtype>

</pointer>

</type>

<name>refint</name>

</type-declaration>

If we then made the name of the type in the type-declaration an attribute

type-declaration: -"type ", type, -": ", @name, -".".

we would get

<type-declaration name='refint'>

<type>

<pointer>

<subtype>

<name>integer</name>

</subtype>

</pointer>

</type>

</type-declaration>

What you see here is that the type name has ended up earlier in the serialisation than the type it defines.

However, this can be useful. For instance, with

comparison: expression, compare, expression. compare: ["<=>≠≤≥"].

with input

a≤0

might give

<comparison>

<expression>

<id>a</id>

</expression>

<compare>≤</compare>

<expression>

<number>0</number>

</expression>

</comparison>

If we change the compare rule to

@compare: ["<=>≠≤≥"].

we would get

<comparison compare="≤">

<expression>

<id>a</id>

</expression>

<expression>

<number>0</number>

</expression>

</comparison>

making later processing potentially easier.

Consider the subtype rule

subtype: name.

giving:

<type-declaration name='refint'>

<type>

<pointer>

<subtype>

<name>integer</name>

</subtype>

</pointer>

</type>

</type-declaration>

If we now change name to an attribute, we get:

Consider the subtype rule

subtype: @name.

giving:

<type-declaration name='refint'>

<type>

<pointer>

<subtype name='integer'/>

</pointer>

</type>

</type-declaration>

If we then made subtype a deleted nonterminal:

Consider the subtype rule

-subtype: @name.

giving:

<type-declaration name='refint'>

<type>

<pointer name='integer'/>

</type>

</type-declaration>

The name attribute hasn't got an element to be an attribute on,

and so moves up to the nearest non-deleted nonterminal.

Take the earlier grammar for expressions, and make the comparison operator an attribute.

Then instead of

sum: -expression, "+", term. product: term, "×", operand.

use repetitions, like:

sum: term, "+", term++"+".

How does this change the resulting serialisation? For instance, compare the

result for "a+b+c" in the two versions.

When developing ixml grammars, you will regularly be confronted with ambiguity, where the system can parse the input in more than one way.

In some cases the content really is ambiguous, but in most cases, it will be a problem in the ixml.

You may not care that your grammar is ambiguous, as long as you get a result, but

Ambiguity can occur for several reasons, for instance:

With the ixml:

specification: "{", rule*, "}".

rule: definition*.

definition: id, "=", value.

an empty specification:

{}

could be zero rules, or one rule of zero definitions.

Fix it by making one of them a +, depending on your needs.

rule: definition*; reference. reference: id?.

In this case, rule can be empty in two different ways.

Another case is expressing a number that can be from 1 to 4 digits:

d4: d, d?, d?, d?.

The intention here is allow 1, 2, 3, or 4 digits, but unfortunately with

input like 123, ixml can't determine which d is

optional; is it 1?23, 12?3, or 123? ?

To make it unambiguous, you have to phrase it as

d4: d, (d, (d, d?)?)?.

To understand this better, see it as a contraction of

d4: d, d3?. -d3: d, d2?. -d2: d, d?.

We will treat this in more detail shortly, but if you have a rule like

set: start, numbers, end.

start: spaces, "{", spaces.

numbers: number**";".

end: spaces, "}", spaces.

spaces: " "*.

where the intention is to allow spaces on either side of the braces, in a case like

{ }

(where there is one space between the braces), ixml doesn't know whether this space is after the open brace, or before the close brace.

For instance:

input: line+. line: ~[]*, #a.

with the intention of meaning "any number of characters ending with a

newline". The problem here is that ~[] includes #a,

so that

1 2 3

could be interpreted as a single line [1 2 3], as two, in two

different ways: [1 2] [3], or [1] [2 3] or as 3

(which was the intention) [1] [2] [3].

The correct way to express this would exclude #a:

line: ~[#a]*, #a.

It's worth pointing out that ambiguity is a property of the input. For instance in the spaces case above, if you deleted all spaces:

-spaces: -" "*.

then all serialisations would turn out identically. But there would still be multiple possible serialisations, and ixml will still report that.

Ambiguous grammars run slower: the system has to process (parts of) the input repeatedly.

Write rules and alternatives so that is is clear which apply as early as possible. For instance

d4: d, (d, (d, d?)?)?.

is better than

d4: (((d?, d)?, d)?, d.

i.e "A digit optionally followed by 3 digits" is faster than "Optionally three digits followed by a digit" because the system knows faster that the rule may apply if it finds a digit.

Similarly, to reduce reprocessing input, factor out identical starts:

thing: "(", expression, ")";

"(", declaration, ")".

⇒

thing: "(", (expression; declaration), ")".

The input is a string of digits and letters. There are no spaces. Split it into words and numbers.

abcd1234defg5678hijk

should give something like

<input> <word>abcd</word> <number>1234</number> <word>defg</word> <number>5678</number> <word>hijk</word> </input>

The exercise at the end of the last section is made harder if you are used to working with regular expressions. That is because you may be used to something like

["a"-"z"]*

being greedy. That is to say, most regular expression software matches the longest possible string that matches the regular expression.

However, grammars are not regular expressions, which makes grammars more expressive, but means you have to watch out for your regular expression idioms when using ixml.

A typical mistake for the previous exercise is to do something like:

input: (word; number)+. word: [L]+. number: ["0"-"9"]+.

The problem with this definition is that it also allows two words to be adjacent to each other; and two numbers likewise. So with the input

ab12

This could be a word and a number, or two words and a number, or a word and two numbers, or two words and two numbers. Longer strings would be even more ambiguous.

The essential problem that is often the cause of such ambiguity is that there are nested repetitions with nothing in between.

One way to disambiguate this, is to ensure that two words can never be adjacent, and likewise numbers:

input: wordnumber; numberword. -wordnumber: word, numberword?. -numberword: number, wordnumber?. word: [L]+. number: ["0"-"9"]+.

Another option is to observe that the input is either a series of words separated by numbers, followed optionally by a number, or a series of numbers separated by words, followed optionally by a word:

input: word++number, number?;

number++word, word?.

If words and numbers were separated by spaces or punctuation, this problem wouldn't arise, and you could write:

input: (word; number)++", ".

but that was the point of this exercise.

A similar mistake is something like this, for input consisting of a number of lines, each line starting with some spaces, and some content:

input: line+. line: spaces, content, #a. spaces: " "*. content: ~[#a]*.

The problem is that the author was expecting " "* to match a

maximal number of spaces, but spaces can match both that and ~[#a]

in the rule for content, and so is ambiguous. To achieve what was

required you have to write:

content: ~[" "; #a], ~[#a]*.

In other words, the first character of the content mustn't be a space.

Add a third class, such as punctuation, with input like

...abcd()1234!!!

giving

<input> <punc>...</punc> <word>abcd</word> <punc>()</punc> <number>1234</number> <punc>!!!</punc> </input>

Hint: there is a Unicode character class P for punctuation.

Some formats are fixed-format: every character has to be in a certain place in the input; but others are free-format, which usually means that whitespace such as spaces and newlines may be freely used.

In the earlier example of types in an imagined language, to simplify the examples, spaces were included in the literals, making it fixed-format:

type-declaration: -"type ", type, -": ", @name, -".". pointer: -"pointer to ", subtype.

But to make the input language more flexible, we should be explicit about where spaces are allowed, and allow more than one space.

type-declaration: -"type", s, type, -":", s?, @name, -".", s. pointer: -"pointer", s, "to", s, subtype. s: -" "+.

You can see where spaces are required, and where optional.

And you can also allow other sorts of whitespace, such as tabs and newlines:

s: -[" "; #9{tab}; #d{carriage return}; #a{linefeed}]+.

When adding spacing, it is important to be consistent. The best advice is to put them as close as possible to the terminals, and after them, rather than before, as illustrated above:

Normally, you won't want to retain spaces in the output, since spaces are normally used to separate items, and don't hold any semantic value (the notable exception being in strings.)

If you want to retain a single space, you can do something like:

name: firstname, " ", -" "*, lastname.

although another option is

name: firstname, s, +" ", lastname.

Add spaces to the grammar for comparisons and expressions. (The output should be exactly the same).

If a symbol consists of several characters, it is easy in ixml, you just put the characters there in the rule:

assignment: identifier, ":=", expression.

However, it needs a little care if a multicharacter symbol is used to end a repetition, where parts of the symbol may occur in the repeated part.

We're going to throw you in the deep end, and let you try and solve such a problem.

If comments weren't nestable in ixml, you could define them as any number of characters that aren't the closing brace:

comment: "{", ~["}"]*, "}".

On the other hand, in Pascal, comments are enclosed in (* and

*). For instance these are all valid comments (the last line is

two comments). You should write ixml to produce output like that given.

(**) (***) (****) (*****) (*)*) (*))*) (*(*) (*abc*) (**abc*) (*abc**) (*abc*abc*) (*(abc)*(abc)*) (**abc*abc*) (*abc**abc*) (*abc*abc**) (*abc* )(*abc*) (*abc*)(*abc*)

<comments> <comment/> <comment>*</comment> <comment>**</comment> <comment>***</comment> <comment>)</comment> <comment>))</comment> <comment>(</comment> <comment>abc</comment> <comment>*abc</comment> <comment>abc*</comment <comment>abc*abc</comment> <comment>*abc*abc</comment> <comment>abc**abc</comment> <comment>abc*abc*</comment> <comment>abc* )(*abc</comment> <comment>abc</comment> <comment>abc</comment> </comments>

Hint: there are several ways to do this, but it is possible to do it using a top-level rule of:

comment: -"(*", -content, -"*)".

The observation is that ")" may occur in a comment, but only if it is not preceded by "*".

So we disallow ")" except when it is preceded by a character other than "*" (which includes when it is at the beginning of a comment, in other words preceded by nothing).

comment: -"(*", content, -"*)".

-content: ")"?, c*.

-c: ~[")"];

~["*"], ")".

Can you see why it wouldn't work if we said "*" may occur in a comment, except when it is followed by ")"?

Some languages are "context-free", which means you can process them in ixml without knowledge of different parts of the input. For instance, "A string of a's followed by the same number of b's" is context free:

ab: "ab"; "a", ab, "b".

This can process ab or aabb or

aaabbb, and so on to an unlimited depth.

However, "a string of a's, followed by the same number of b's, and then the same number of c's" is not context-free. To handle that in ixml you'd have to use a permissive grammar that assumed the input was correct, or otherwise one that restricted the depth you could handle:

ab: "abc";

"aabbcc";

"aaabbbccc";

...

to whatever depth you wanted to accept.

Another example of the contrast is in nested lists. A list like

(Monday(tea coffee) Tuesday Wednesday(morning(coffee biscuits) afternoon(tea cakes)) Thursday(closed))

where the nesting start and end is made explicit with brackets is context-free: you can write ixml that could process this to an unlimited depth of nesting.

Semantically equivalent forms like these are not context-free.

While you can write ixml to process them, you can only process them to a fixed depth of nesting.

Write ixml to handle one of these two forms of nested list.

0 Monday 1 tea 1 coffee 0 Tuesday 0 Wednesday 1 morning 2 coffee 2 biscuits 1 afternoon 2 tea 2 cakes 0 Thursday 1 closed

Monday

tea

coffee

Tuesday

Wednesday

morning

coffee

biscuits

afternoon

tea

cakes

Thursday

closed

One thing to be aware of is that there is no implied ordering in the alternatives of a rule, and there is no equivalent of an else: each alternative has to be explicit in what it accepts. In a rule like:

a: b; c; d.

this is not saying "Try b, then c, then

d". The alternatives are effectively (and in some implementations,

actually) processed in parallel, and furthermore all alternatives that match

are accepted (which is when you have ambiguity).

A simple programming language has simple statements:

id := expression id(expression, expression, ...)

and compound statements

if comparison then statement if comparison then statement else statement while comparison do statement begin statement statement ... end

Write ixml to accept this input. Don't forget spaces.

You can use the expression grammar from earlier, or something very simple, such as just identifiers and numbers.

What happens if you have as input "begin := 0"?

ixml was explicitly designed not to be about producing particular versions of XML, but for turning non-XML formats into XML, which can then be treated further in the extensive XML toolchain if a particular version of an XML vocabulary is desired.

That notwithstanding, it is occasionally possible to design a format that is treatable with ixml to produce (close to) an existing XML format (for instance Docbook bibliographies), or to transform an existing format and turn it into an existing XML format (for instance Markdown→XHTML).

So there are two complementary questions that arise:

The first question was mostly addressed in the earlier sections: you are constrained by the input format, you have to discover the concepts you want represented in the output, which characters go in elements, which if any in attributes, and which characters should be deleted.

The second question is constrained by the output format, which very much drives the structure of the ixml.

Every element and attribute has a non-hidden rule.

Elements that have the same name must have the same format.

You can add characters on the input to help drive the selection of elements, and those characters should be deleted on the output.

For instance, if you were designing a textual format for XHTML, you might

say an h1 starts with =, and h2 with

==, and so on:

h1: -"=", text. h2: -"==", text. -text: ~[#a]+, -#a.

XForms has a select1 control for defining a control (such as a

drop-down menu). You can actually see two of them in use at the top of the

tutorial for selecting chapters.

<select1 ref="result">

<label>Currency</label>

<item>

<value>EUR</value>

<label>Euro</label>

</item>

<item>

<value>GBP</value>

<label>Great Britain Pound</label>

</item>

<item>

<value>USD</value>

<label>United States Dollar</label>

</item>

</select1>

For simplicity, assume the ref attribute is just a word (it is

actually an XPath expression), a value is a string of characters

without spaces, and a label is a string of characters. Design a

textual markup with matching ixml to produce the above output.

Traditionally, syntax descriptions are used to describe what is acceptable or correct, but are not particularly interested in presenting any particular structure.

On the other hand, with ixml it is principally the structure we are interested in, and to a lesser extent the correctness of the input (since in many cases we can assume that the input is already correct).

To this end we can talk about Permissive and Strict descriptions: whether we assume the input is correct or not.

For instance, an IPv4 address is a 32 bit number represented by splitting it into 4 groups of 8 bits, each group represented by an (up to) 3 digit decimal number, such as

192.168.1.2

The most permissive description of this would be:

IPv4: (["0"-"9"]+)++".".

If we wanted to be somewhat stricter:

IPv4: d3, ".", d3, ".", d3, ".", d3. d3: d, (d, d?)?. d: ["0"-"9"].

If we know that the input is going to be correct, then this is fine; or we could check that the values are in range after serialisation.

If we want to be yet stricter, then we can restrict the values of

d3 to the range 0-255:

d3: d; { 0- 9}

["1"-"9"], d; { 10- 99}

"1", d, d; {100-199}

"2", ["0"-"4"], d; {200-249}

"25", ["0"-"5"]. {250-255}

We can adopt a similar permissive/strict approach to dates like

31/12/2022, either accepting any range of digits

date: d, d, "/", d, d, "/", d, d, d, d.

or restricting the values suitably.

This is a 128 bit number, represented in 8 groups of 16 bits, separated by colons.

Each group is represented by up to 4 hexadecimal digits. For instance:

2001:0db8:85a3:0000:0000:8a2e:0370:7334

This is easy in ixml. If we decide not to restrict it to 8 groups, we can say:

IPv6: h4++-":". h4: h, h, h, h. -h: ["0"-"9"; "a"-"f"; "A"-"F"].

Leading zeros can be omitted, but at least one digit must remain:

2001:db8:85a3:0:0:8a2e:370:7334

So we define:

h4: h, (h, (h, h?)?)?.

The left-most longest string of zero values may be replaced by

:: :

2001:db8:85a3::8a2e:370:7334

which we could represent with

IPv6: h4**-":", zeros, h4**-":". zeros: -"::".

The nice thing about doing it this way, is the semantic value of having an

element representing the missing values, 2001:DB8::1 giving:

<ipv6>

<h4>2001</h4>

<h4>DB8</h4>

<zeros/>

<h4>1</h4>

</ipv6>

The last two groups may be replaced by an IPv4-style value

2001:db8:85a3::8a2e:3.112.115.52

Add this.

Harder: make it strict, so that there are exactly 8 groups if the "::" form is not used, and less than 8 groups if it is used.

CSV, "Comma separated values" is a spreadsheet-derived data format, with no formal definition, but that is so often used, is worth having an ixml grammar for.

Here is an example of CSV from the Wikipedia page on CSV:

Year,Make,Model,Description,Price 1997,Ford,E350,"ac, abs, moon",3000.00 1999,Chevy,"Venture ""Extended Edition""","",4900.00 1999,Chevy,"Venture ""Extended Edition, Very Large""",,5000.00 1996,Jeep,Grand Cherokee,"MUST SELL! air, moon roof, loaded",4799.00

What we can see is that a CSV document consists of a number of rows:

csv: row*.

Each row consists of a number of values, comma separated, followed by a newline:

row: v**",", nl.

But we don't want the commas in the output, since they aren't part of the data:

row: v**-",", nl.

A newline is a newline character, possibly preceded by a carriage return; we want neither of these in the output:

-nl: -#d?, -#a.

A basic value consists of any number of characters as long as they aren't a comma, or a newline:

v: ~[","; #a; #d]*.

However, it is possible to include commas and newlines, as long as the value is quoted. So we change the definition of v to:

v: quoted; unquoted.

An unquoted value is either empty, or is the same as the old definition of v, except that the first character can't be a quote (or comma, or newline):

-unquoted: ; ~['",'; #a; #d], ~[","; #a; #d]*.

(Quoted values are the exercise)

Using the above example csv from the wikipedia page (note values with commas, quotes, and newlines) as input, we get this result:

<csv>

<row>

<v>Year</v>

<v>Make</v>

<v>Model</v>

<v>Description</v>

<v>Price</v>

</row>

<row>

<v>1997</v>

<v>Ford</v>

<v>E350</v>

<v>ac, abs, moon</v>

<v>3000.00</v>

</row>

<row>

<v>1999</v>

<v>Chevy</v>

<v>Venture "Extended Edition"</v>

<v/>

<v>4900.00</v>

</row>

<row>

<v>1999</v>

<v>Chevy</v>

<v>Venture "Extended Edition, Very Large"</v>

<v/>

<v>5000.00</v>

</row>

<row>

<v>1996</v>

<v>Jeep</v>

<v>Grand Cherokee</v>

<v>MUST SELL!

air, moon roof, loaded</v>

<v>4799.00</v>

</row>

</csv>

As an extra simple example, if we feed an empty file to the ixml, we get:

<csv/>

Here's the grammar collected:

csv: row*. row: v**-",", nl. v: quoted; unquoted. -nl: -#d?, -#a. -unquoted: ; ~['",'; #a; #d], ~[","; #a; #d]*.

Define "quoted", and check you get the same output as above.

The hardest part of getting an article into Docbook format (the XML format used by several conferences for their papers) is getting the bibliography right. Although ixml was not designed to produce particular versions of XML, it is possible to produce a Docbook bibliography with the help of ixml. For instance, the text for the ixml specification:

[spec] Steven Pemberton (ed.), Invisible XML Specification, invisiblexml.org, 2022, https://invisiblexml.org/ixmlspecification.html

was processed by an ixml grammar whose top-level rules were something like

bibliography: biblioentry+.

biblioentry: abbrev, (author; editor), -", ", title, -", ",

publisher, -", ", pubdate, -", ",

(artpagenums, -", ")?, (bibliomisc; biblioid)**-", ", -#a.

It is a fairly fixed format, field separators are a comma and a space.

It is largely a permissive grammar: many fields are defined as any string of characters not containing a comma, close square bracket, or newline:

title: entry. publisher: entry. -entry: ~[",]"; #a]+.

Optional fields are identified by particular substrings. For example

artpagenums start with pp:

artpagenums: -"pp ", [Nd; "-–"]+.

A bibliomisc is a web address, beginning http

A bibliod is either an ISBN, beginning with ISBN,

or a DOI, beginning doi:.

[spec] Steven Pemberton (ed.), Invisible XML Specification, invisiblexml.org, 2022, https://invisiblexml.org/ixmlspecification.html

gives

<biblioentry>

<abbrev>spec</abbrev>

<editor>

<personname>

<firstname>Steven</firstname>

<surname>Pemberton</surname>

</personname>

</editor>

<title>Invisible XML Specification</title>

<publisher>invisiblexml.org</publisher>

<pubdate>2022</pubdate>

<bibliomisc>

<link xlink-href='https://invisiblexml.org/ixml-specification.html'/>

</bibliomisc>

</biblioentry>

which can then further be tweaked by hand.

Similar to CSV: fields are comma separated, though their content is determined either by their position, or some substring in the content.

Some fields however could in principle contain commas, in particular the title field. We can solve this in a similar way to CSV, by allowing fields to be surrounded by quotes.

Change this ixml so that at least the title field may contain commas. Here is some data to try it on:

[ixml] Steven Pemberton, Invisible XML, Proceedings of Balisage: The Markup Conference 2013 Balisage Series on Markup Technologies vol. 10, 2013, doi:10.4242/BalisageVol10.Pemberton01 [spec] Steven Pemberton (ed.), Invisible XML Specification, invisiblexml.org, 2022, https://invisiblexml.org/ixmlspecification.html [ixml2] Steven Pemberton, On the Specification of Invisible XML, Proc. XML Prague 2019, 2019, pp 413-430, https://archive.xmlprague.cz/2019/files/xmlprague-2019-proceedings.pdf#page=425 [xf] Steven Pemberton, """Form"", and Content", Proc. XML Prague 2018, 2018, pp 213-228, https://archive.xmlprague.cz/2018/files/xmlprague-2018-proceedings.pdf#page=225

Gedcom is a (non-context-free) format for recording geneological data (family trees). To be honest, it is a fairly badly-designed format, that looks like it was designed by a programmer used to assembly language. Here is an example of an entry from such a file.

The leading numbers give the nesting of the field, followed by the 3 or 4-letter name of the field, and then the value of that field, if any. Since it is not context-free, we are constrained in the range of solutions for this, but we can still make something that is more structured.

0 @I1@ INDI 1 NAME Robert Eugene /Williams/ 2 SURN Williams 2 GIVN Robert Eugene 1 SEX M 1 BIRT 2 DATE 2 Oct 1822 2 PLAC Weston, Madison, Connecticut, United States of America 2 SOUR @S1@ 3 PAGE Sec. 2, p. 45 1 DEAT 2 DATE 14 Apr 1905 2 PLAC Stamford, Fairfield, Connecticut, United States of America 1 BURI 2 PLAC Spring Hill Cemetery, Stamford, Fairfield, Connecticut, United States of America 1 FAMS @F1@ 1 FAMS @F2@ 1 RESI 2 DATE from 1900 to 1905

At the top level, we have a record numbered zero, followed by some number of nested fields numbered 1:

gedcom: record*. record: -"0 ", field, r1*.

Similarly, a record numbered 1 is going to have its value, followed by any number of records numbered 2:

r1: -"1 ", field, r2*.

and so on, as far we need to go:

r2: -"2 ", field, r3*. r3: -"3 ", field, r4*. r4: -"4 ", field, r5*. r5: -"5 ", field, r6*. r6: -"6 ", field, r7*. r7: -"7 ", field, r8*. r8: -"8 ", field, r9*. r9: -"9 ", field.

Keeping it simple now, a field is a name and an optional value:

-field: name, (-" ", value)?, -#a. @name: ["A"-"Z"; "0"-"9"; "@"]+. @value: ~[#a]*.

This already gives a reasonably structured result:

<gedcom>

<record name='@I1@' value='INDI'>

<r1 name='NAME' value='Robert Eugene /Williams/'>

<r2 name='SURN' value='Williams'/>

<r2 name='GIVN' value='Robert Eugene'/>

</r1>

<r1 name='SEX' value='M'/>

<r1 name='BIRT'>

<r2 name='DATE' value='2 Oct 1822'/>

<r2 name='PLAC' value='Weston, Madison, Connecticut, United States of America'/>

<r2 name='SOUR' value='@S1@'>

<r3 name='PAGE' value='Sec. 2, p. 45'/>

</r2>

</r1>

<r1 name='DEAT'>

<r2 name='DATE' value='14 Apr 1905'/>

<r2 name='PLAC' value='Stamford, Fairfield, Connecticut, United States of America'/>

</r1>

<r1 name='BURI'>

<r2 name='PLAC' value='Spring Hill Cemetery, Stamford, Fairfield, Connecticut, United States of America'/>

</r1>

<r1 name='FAMS' value='@F1@'/>

<r1 name='FAMS' value='@F2@'/>

<r1 name='RESI' value=''>

<r2 name='DATE' value='from 1900 to 1905'/>

</r1>

</record>

</gedcom>

One thing that this exposes is that the structure isn't always completely

consistent. For instance, the RESI field at the end of the example

has an empty value attribute because the name is followed by a

space, but no value.

Fix the above problem, so that empty values don't appear in the result.

Actually, the structure of a top-level element can be different: if it

starts with something like @I1@, this is an identifier, and in

other records, a field value of that style is a reference to a record with that

identifier. Your job is to make the identifiers on top-level records into

id attributes, like:

<record id='I1' name='INDI'>

and make uses of such values as link attributes, for instance

in the above example:

<r1 name='FAMS' link='F1'/>

Here is a larger example of a gedcom file for you to run on: gedcom.

Markdown is a class of languages meant to make writing HTML text documents easier. Unfortunately, there are several different versions, and not all of them have specifications.

Since we'll only touch on some of the features here, we'll take the liberty of not using any particular version of Markdown.

To show it working, this chapter in the tutorial was actually produced using the ixml below on a Marked-down version of the chapter.

Since Markdown is just a representation of an HTML document, the top level is:

html: head, body.

We can add anything we like to the head using insertions:

head: meta, title. meta: name, content. @name: +"generator". @content: +"ixml". title: +"Markdown".

This will cause every serialised result to start

<html>

<head>

<meta name='generator' content='ixml'/>

<title>Markdown</title>

</head>

<body>

For the time being, let's keep the body simple: headings and paragraphs separated by blank lines:

body: part++(-#a+). -part: heading; para. -heading: h1; h2; h3; h4; h5; h6. -para: p.

Headings are a line starting with # characters, and optionally

ending with them as well.

h1: -"# " , htext, -"#"*, -#a. h2: -"## ", htext, -"#"*, -#a. h3: -"### ", htext, -"#"*, -#a. h4: -"#### ", htext, -"#"*, -#a. h5: -"##### ", htext, -"#"*, -#a. h6: -"###### ", htext, -"#"*, -#a.

Heading text is a series of heading characters. Hash characters are only allowed in the text if they are followed by a non-hash character:

-htext: hc+. -hc: ~["#"; #a]; "#", ~["#"; #a].

Paragraphs mustn't start with a hash, clearly, and consist of a number of lines of text:

p: ~["#"], line++nl, -#a. -line: c+. -c: ~[#a].

We have separated out the nl, since it should be retained in

the output, and not deleted.

-nl: #a.

Text within a paragraph can be marked with *, _,

or ` to produce strong or emphasised or

code. So we change the definition of the contents of

line accordingly:

-line: c+.

-c: ~[#a; "*_`"]; em; strong; code.

strong: -"**", cstar+, -"**";

-"__", cunder+, -"__".

em: -"*", ~["*"], cstar+, ~["*"], -"*";

-"_", cunder+ , -"_".

code: -"`", ccode+, -"`".

-cstar: ~["*"; #a].

-cunder: ~["_"; #a].

-ccode: ~["`"; #a].

Finally, we will add one more type of paragraph, code blocks, which will be

produced using the pre element in HTML, and start with a space in

the input. So add the new type of paragraph:

-para: p; pre.

Make sure that p paragraphs don't start with a space:

p: ~["# "], line++nl, -#a.

and now define a pre paragraph similar to

p paragraphs:

pre: (" ", preline)++nl, -#a.

-preline: ~[#a]*.

Take the ixml code collected

above, and add a horizontal rule element, that consists of a line starting

with three or more hyphens. (Don't forget to make sure that p

paragraphs consequently don't start with a hyphen).

Add a hypertext a element, of the form

[text of the link](link)

for example

[the ixml code collected above](../examples/markdown.ixml)

This can go anywhere in the body of a p paragraph.

The final case study is the grammar of ixml itself. This grammar has the following properties:

alts in rule), the marks

for a rule are on the definition and are not overridden.At the top level is the rule for rule:

rule: (mark, s)?, name, s, -["=:"], s, -alts, -".".

This rule defines itself: a rule is an optional mark, followed by a name, a colon or equals, some alternatives, and then a full-stop.

Alts are just one or more alts separated by

semicolons or vertical bars, an alt is zero or more

terms, separated by commas:

alts: alt++(-[";|"], s). alt: term**(-",", s).

The rule s is for optional whitespace.

-s: (whitespace; comment)*.

What you will see is that its use always directly follows a terminal (such

as "-["=:"], s" above in rule), except if

that terminal is in an attribute, in which case the whitespace is moved to

directly follow the attribute (such as for mark, and

name above), in order to prevent comments ending up in attribute

content.

Whitespace is any character so classified in Unicode, plus tab, carriage-return, and linefeed:

-whitespace: -[Zs]; tab; lf; cr.

A comment is any number of comment characters or comments, surrounded by { } braces. A comment character is any character that isn't one of the braces. This definition allows nested comments, so that you can comment out a piece of ixml:

comment: -"{", (cchar; comment)*, -"}".

-cchar: ~["{}"].

Most rules are self-explanatory. But there a couple worth looking at.

A string is one or more dchars enclosed by double

quotes (and similar for single quotes).

@string: -'"', dchar+, -'"';

-"'", schar+, -"'".

A dchar is any character except a double quote or a newline

(since strings may not extend over lines), or two double quotes:

dchar: ~['"'; #a; #d];

'"', -'"'.

Note though that since two double quotes represent a single quote in the string, one is deleted, and the other is not.

A literal is either a string or a hex encoding:

literal: quoted;

encoded.

-quoted: (tmark, s)?, string, s.

-encoded: (tmark, s)?, -"#", hex, s.

Since string and hex are both attributes, they

appear as a literal element in the serialisation, and the

attributes are raised up to it. For "a":

<literal string='a'/>

for #a

<literal hex='a'/>

If they have a mark, it will similarly appear here. For instance for

-#a:

<literal tmark='-' hex='a'/>

Hex encodings are treated slightly differently in character sets, which appear in the serialisation as either an inclusion or an exclusion, containing a series of members:

["a"; #a; "A"-"Z"; #a-"a"]

appears as:

<inclusion> <member string='a'/> <member hex='a'/> <member from='A' to='Z'/> <member from='#a' to='a'/> </inclusion>

That is to say, for a range, there aren't separate attributes for a

string from and a hex from: they are both called

from, and they are distinguished by whether they contain one

character, or two where the first is a '#', so the '#' in that one case is not

deleted.

Change the definition for ranges so that there are different attributes for ranges. So the above would appear as

<inclusion> <member string='a'/> <member hex='a'/> <member from='A' to='Z'/> <member fromhex='a' to='a'/> </inclusion>